It’s been sweltering in the DC area. For most of the past week, we’ve had a “heat dome” over the region, meaning it’s gotten to around 100F (37.8C) on multiple days. Coupled with high humidity (it’s been a rainy summer so far), that means I’ve been running my AC regularly. It is summer, after all.

In past years, back in Kansas City, I would sometimes turn off my server (!) in order to save on electricity costs.

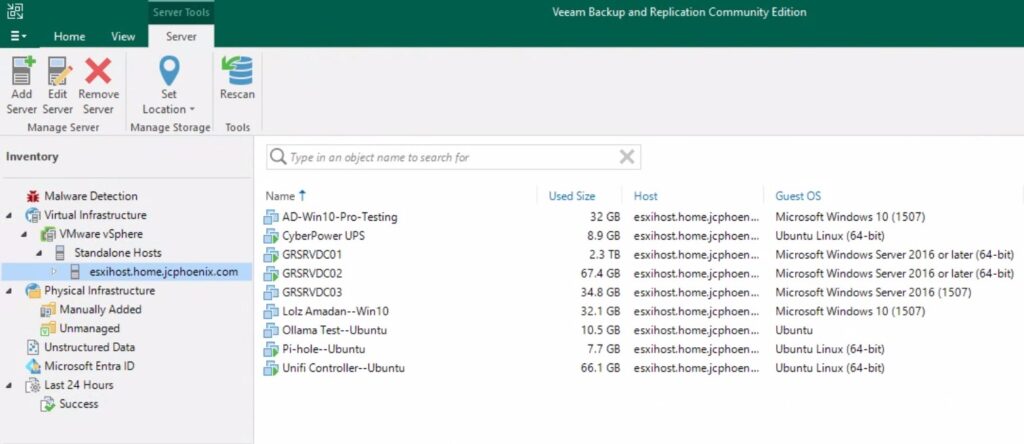

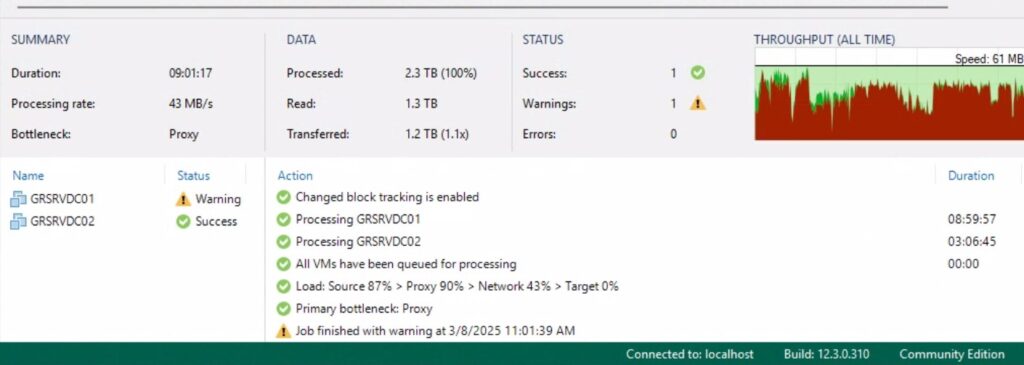

Let’s face it: I don’t do that much with my server. Yeah, it hosts my DCs, long-term storage, and some other services, but the reality is that I don’t really touch it that much. The computers I use regularly aren’t even domain-joined. I rarely go through my old music and movies/shows collections. And some of the services that used to be hosted on my server have been taken over by other devices.

On that last point, two come to mind: the Unifi Controller and WireGuard VPN. Unifi is now hosted on my Cloud Gateway Ultra. I had to host it in a VM when I had my old USG in place, but the UCG completely replaced that.

The same goes for WireGuard. I mentioned in Homelab Chronicles 11 that I set up WG-Easy within a VM. About a month ago, however, I set up Unifi Identity and a WireGuard server in the Controller. I’ve been testing that out with some of my devices and it’s been great. I have a couple of other devices where I need to switch the WG config files out, but once that’s done, I can sunset WG-Easy.

Related to hosting a VPN is making sure Dynamic DNS is set up and working. I spoke about this in Homelab Chronicles 09. No matter where my VPN server is here at home, my devices need to know its public IP address. With a dynamic, albeit “sticky,” IP address, the easiest way to do this is with a domain and DDNS.
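For anyone curious what a DDNS client boils down to, here is a minimal sketch of the core loop: look up the current public IP, compare it with what the DNS record says, and only push an update when they differ. The three callables (`get_public_ip`, `get_record_ip`, `set_record_ip`) are hypothetical placeholders for whatever IP-lookup service and DNS provider API you use, and the addresses are documentation-range examples, not real ones.

```python
import ipaddress
from typing import Callable, Optional


def ddns_sync(
    get_public_ip: Callable[[], str],
    get_record_ip: Callable[[], Optional[str]],
    set_record_ip: Callable[[str], None],
) -> bool:
    """Update the DNS record only if the public IP changed.

    Returns True when an update was pushed, False when nothing changed.
    All three callables are assumptions standing in for real lookups.
    """
    # Parse both values so whitespace or formatting differences don't
    # trigger a needless update (and so garbage raises a ValueError).
    current = ipaddress.ip_address(get_public_ip().strip())
    recorded_raw = get_record_ip()
    recorded = ipaddress.ip_address(recorded_raw.strip()) if recorded_raw else None

    if current == recorded:
        return False

    set_record_ip(str(current))
    return True


# Usage with an in-memory stand-in for the DNS provider.
fake_dns = {"ip": "198.51.100.7"}
changed = ddns_sync(
    get_public_ip=lambda: "203.0.113.10\n",
    get_record_ip=lambda: fake_dns["ip"],
    set_record_ip=lambda ip: fake_dns.update(ip=ip),
)
print(changed, fake_dns["ip"])
```

A cron job or a router's built-in client is essentially this check run on a schedule.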

Many home routers have a DDNS client built in, and Unifi gateways are no different here. And there are plenty of registrars and DDNS services it can work with. My domain is registered at Namecheap, which is one of the services Unifi can work with. Unfortunately, I point it to nameservers at Dreamhost, so the DNS records don't actually live at Namecheap. Dreamhost is where this blog is hosted!

I could move the DNS back to Namecheap, but with this site and email, I’d rather not. So what to do? I want to stop doing DDNS from my server, but don’t want to move the domain’s DNS to a compatible service.

Well, that’s where money comes into play. Yes, that’s right, cold hard cash to the rescue.

I bought a new domain at Cloudflare. Spent a whole $7.50, which rises to $11/yr after the first year.

Afterwards, I put the domain details and API key into Unifi. Then about a minute later, I checked the DNS at Cloudflare, and behold! My home IP address as an A record!
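Checking the Cloudflare dashboard works fine, but the same check can also be scripted. The sketch below resolves a hostname with Python's standard `socket.getaddrinfo` and tests whether an expected address is among the results. The hostname and IP in the usage comment are made-up examples, and the `resolver` parameter is only there so the function can be exercised without touching the network.

```python
import socket


def resolve_ipv4(hostname, resolver=socket.getaddrinfo):
    """Return the sorted, de-duplicated IPv4 addresses for a hostname."""
    infos = resolver(hostname, None, socket.AF_INET, socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr);
    # for AF_INET, sockaddr is (address, port).
    return sorted({info[4][0] for info in infos})


def record_matches(hostname, expected_ip, resolver=socket.getaddrinfo):
    """True if the hostname currently resolves to expected_ip."""
    return expected_ip in resolve_ipv4(hostname, resolver)


# Example call (hypothetical hostname and address):
# record_matches("home.example.com", "203.0.113.10")
```

Keep in mind that the local resolver may hand back a cached answer, so a freshly updated record can take a little while to show up this way.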

I still need to update the existing individual WG config files on each device by changing the server address to my new domain. Then I need to test it by seeing if I can connect. But I’m not expecting any issues; I’ve done this before a few times with work VPN config files.
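The change in each config file is just the `Endpoint` line in the `[Peer]` section. Editing by hand is fine for a handful of devices, but as a rough sketch, a small helper could swap the host while keeping the port. The function name, hostnames, and sample config here are all made up for illustration, and the keys are placeholders.

```python
import re

# Matches "Endpoint = host[:port]" on its own line, capturing the key part,
# the host, and the optional port separately.
_ENDPOINT = re.compile(
    r"^([ \t]*Endpoint[ \t]*=[ \t]*)(\S+?)(:\d+)?[ \t]*$",
    re.MULTILINE,
)


def set_endpoint_host(config_text: str, new_host: str) -> str:
    """Replace the host on every Endpoint line, preserving the port."""
    return _ENDPOINT.sub(
        lambda m: f"{m.group(1)}{new_host}{m.group(3) or ''}",
        config_text,
    )


sample = """[Interface]
PrivateKey = <client-private-key>
Address = 10.0.0.2/32

[Peer]
PublicKey = <server-public-key>
AllowedIPs = 0.0.0.0/0
Endpoint = old.example.com:51820
"""

print(set_endpoint_host(sample, "vpn.example.net"))
```

Everything else in the file, including keys and `AllowedIPs`, is left untouched.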

Once I’ve confirmed everything is working, I can turn off WG-Easy and stop my current DDNS cron job. And that should be the last external-facing service. I can power down my server until I need it again.

Anyway, that’s it. Nothing super technical today. My point with this post is that sometimes spending a little money can be the quickest solution. I probably could’ve spent a couple hours trying something else. Moving the DNS, for example, isn’t difficult, but it can be tedious and delicate, especially with DNS propagation. With long TTLs, it can take a while to see if the migration worked properly.

But spending about $10/yr will have saved me a decent amount of time and effort. I can’t always spend money to solve problems. Some solutions might be too costly. Though this is a case where it made the most sense.

Lastly, I decided to go with Cloudflare instead of Namecheap, where my other domains are hosted, mainly because I’ve never used Cloudflare before. I know Cloudflare provides some DDoS protections, but I’m not really expecting to ever have to utilize those. It was also slightly easier to configure in Unifi and it was cheaper.

OK, time to test.